SJVN and the fragile bazaar

In a recent opinion column in The Reg, SJVN describes a severe problem that open-source software is facing today, namely the burnout of (unpaid) software developers: “Feeling the burn: When open source developers decide to take a break.” Apparently,

workaholism in open source isn’t a personal quirk of a few over‑committed hackers. It’s a structural pattern baked into how modern OSS is funded, consumed, and celebrated.

He cites Eric S. Raymond’s “Homesteading the Noosphere” and “The Cathedral and the Bazaar” as the Constitution and the New Testament of open source. Since those essays were written, the situation has only worsened.

Unfortunately, “just take a break” cannot fix anything. This is not the first time that an experienced technology journalist like SJVN has written an opinion without having one.

But I do have an opinion on today’s open-source software.

All software, both closed-source and open-source, is now incredibly complex, much more than it was in the 1990s. Maintaining it requires a much larger workforce and an insane number of man-hours.

At the same time, not all software that exists was strictly necessary. Most open-source projects were indeed born out of passion, so they appeared as alternatives to existing projects: libraries, frameworks, languages, Linux distributions or operating systems, desktop environments, application software. This has created a level of complexity that could have been avoided.

Moreover, such open-source enthusiasts behave like those rabbits from a Duracell advertisement or like highly functional ADHD kids: they often “get bored” when their product (a library, end-user software, etc.) reaches stability and only evolves incrementally, if at all. So they want to rewrite everything from zero, to change the metaphor, to rebase the software on different libraries or technologies, or merely to create a new version that breaks backwards compatibility.

Such behavior can also be encountered in closed-source software, but it’s prevalent in the open-source community.

Things got out of control.

In most cases, the maintainers of such open-source software aren’t paid at all. Exceptions exist, with major projects (the Linux kernel, GNOME, etc.) being sponsored by major corporations, who also employ some of the respective developers.

But the fact that the entire scaffolding of the software on Earth relies on hundreds and thousands of small open-source projects that are maintained by a handful of software developers is a major security and business continuity vulnerability.

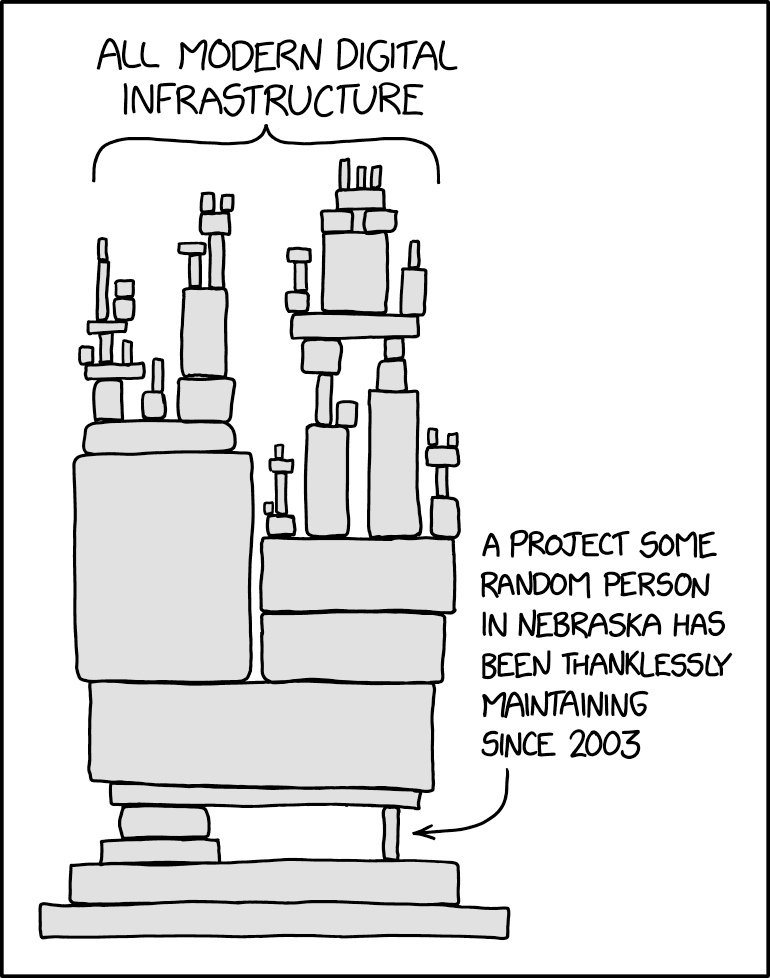

The classic reference: xkcd: Dependency

Take, for instance, Red Hat Enterprise Linux, a commercial product based on open-source software. Suppose it’s used by the military and other mission-critical entities. Despite Red Hat’s best efforts, if several open-source components were left without a maintainer, the entire OS would be at risk. And RHEL is supported only for the limited amount of software included in the distro, not for EPEL and other software that’s not offered by Red Hat. And yet, there is software out of anyone’s control.

I would suggest the following scenario if we’re to care about our future.

Critical open-source software should be identified, preferably with alternatives (for example, both GNOME and KDE should be deemed “critical”). A consortium of IT companies should be tasked with making a list of such software.

For each such project, a secure path with regard to maintainability, security, and business continuity should be designed. There are, IMHO, two possible paths:

- Such critical projects should be completely adopted by a commercial entity under a statute that would prevent them from becoming closed-source or discontinued. GPL’ed software should ensure that the sources remain publicly available. Commercial licenses with strong SLAs or other services should be possible.

- Such critical projects should benefit from enough financial support that the risk of capsizing is eliminated. The aforementioned consortium could dedicate a number of paid software developers to the respective projects.

Only in such conditions could we have open-source operating systems, open-source databases, open-source frameworks, and so on that we could trust.

On the other hand, this would split the world of open-source software in two: the part with strong support and the part that targets the enthusiasts, the “hackers,” and the tinkerers. But, IMO, it’s the only path to sustainability. Otherwise, all open-source software risks following the path of the ~200 Linux distros that died, of the desktop environments that are as good as dead or in danger, and of the many abandoned projects that used to be great but that only a few of us still remember.

What do you think?

Here’s Grok’s answer (version 4.20 Beta):

I think you’re spot on with the diagnosis, and your proposed fix is one of the more realistic paths forward I’ve seen. The open-source world has scaled from a fun hacker gift economy (ESR’s “Homesteading the Noosphere” and “The Cathedral and the Bazaar” nailed the 1990s vibe perfectly) into the invisible scaffolding of literally everything—and the scaffolding is held together with duct tape and the goodwill of a few exhausted volunteers. SJVN’s column today captures the human side of it exactly: workaholism isn’t a bug, it’s the default mode because the economics, culture, and expectations never grew up with the software’s importance.

The xkcd 2347 comic you linked is the perfect visual summary—no one has said it better in 15 years.

The problems you outlined are real and worsening

- Complexity explosion + unnecessary duplication: Modern stacks are comically over-engineered compared to the 90s. Every language has 5–10 competing web frameworks, every niche has three “lightweight” alternatives that aren’t, and the rewrite-from-scratch itch (boredom + “this time it’ll be perfect” syndrome) is very real in OSS. Closed-source does it too (see: every major rewrite at big tech), but OSS celebrates it as “innovation.” Result: maintenance debt multiplies, backward-incompatibility becomes sport, and users get fragmentation fatigue.

- Burnout as structural, not personal: Recent data backs this hard. Tidelift surveys (2024–2025) show ~60% of maintainers unpaid, 60% have considered quitting, 44% cite burnout severe enough to walk. Kubernetes Ingress NGINX retired in late 2025 not from lack of users but from maintainer exhaustion. cURL’s Daniel Stenberg killed the bug-bounty program because of AI-generated “slop” PRs. Asahi Linux lost key people. The Raymond-era assumption (“we code for fun, reputation is the currency”) doesn’t survive when your library is in every phone, server, car, and military system.

- Supply-chain single points of failure: Exactly the xkcd tower. One burned-out dev in Nebraska (or wherever) can take down huge chunks of the internet. The XZ Utils near-miss in 2024 and endless Log4j-style scares prove it. RHEL/EPEL can’t insure the whole universe; neither can any distro.

Your two-path solution for critical projects makes sense

Identify the foundational layer (kernels, core libs, major DEs, databases, crypto libs, container runtimes, etc.) via a broad consortium (Linux Foundation + OpenSSF + big vendors + governments already do versions of this—see the Open Source Security Foundation’s “critical projects” work and Germany’s Sovereign Tech Fund). Then:

- Commercial adoption route (with ironclad GPL/copyleft enforcement) — This already works well for some (Red Hat + CentOS Stream/Fedora model, Canonical + Ubuntu, SUSE). Companies get SLAs, support contracts, and liability shielding; the code stays open and forkable. The risk of “going closed” is low if the license is enforced and community oversight exists.

- Dedicated funding route — Paid maintainer programs (Rust Foundation’s new one, CNCF, Eclipse, Apache) or consortium-pooled engineers. Data shows paid maintainers fix vulns 3× faster, adopt security practices 55% more, and leave far fewer unfixed issues.

This does create a two-tier OSS world: “enterprise-grade critical infrastructure” (boring, stable, funded, SLA-backed) vs “hacker/tinkerer/experimental” (fast-moving, fun, high churn, high risk). That’s not a bug—it’s maturity. The hobby tier is where the next big ideas come from (Rust, Zig, new DE experiments, etc.). The critical tier just needs to not collapse.

A few additions/caveats from the current landscape (2025–2026)

- It’s already happening piecemeal: Linux Foundation, OpenJS, CNCF, OpenSSF, and government funds (EU proposals for a broader Sovereign Tech Fund, German STF investments) are doing the identification and funding. GitHub just shipped new maintainer tools (Feb 2026) to throttle PRs and reduce noise. Some projects (Vue, Homebrew, etc.) have professionalized with sponsorship tiers and strict boundaries.

- Corporate responsibility needs teeth: Big tech uses trillions of dollars worth of OSS but often contributes pennies. Mandated “pay-to-play” percentages (of revenue or engineer time) or procurement rules requiring SBOMs + maintainer-health scores would accelerate things without a single central “OSS Politburo.”

- Cultural shift required: “Just take a break” is insufficient, as you said. We need to normalize saying “no” to entitled issues/PRs, celebrate maintenance as heroic (not just shiny new features), and treat maintainers like the infrastructure engineers they are. AI is making this worse (slop PRs) but could help (auto-triage, security scanning).

- Risks of your model: Defining “critical” invites politics and capture. A consortium dominated by the usual suspects (Google, Microsoft, Amazon, Red Hat) might deprioritize things that threaten their interests. Innovation could slow in the funded tier. But the alternative—hoping volunteers keep the world running—is clearly failing.

Bottom line: Open source won. It powers the planet cheaper and more securely than the proprietary alternative ever could have. But winning exposed the model’s limits. Treating the critical 5–10% as actual public infrastructure (funded, professionally maintained, still open) while letting the long tail stay the chaotic bazaar is the only sustainable way forward. Your framing—split the world, professionalize the foundation—is pragmatic and honest. The alternative is more burned-out maintainers, more abandoned critical projects, and more “wait, that tiny library was holding up the entire internet?” moments.

We’re not there yet, but the conversation has finally moved from “OSS is magic and free” to “OSS is infrastructure and needs real economics.” That’s progress. Thanks for laying it out so clearly.

Why couldn’t SJVN suggest any such plan? Should we replace SJVN with a chatbot?
