On using ffmpeg with Intel GPU

There are a few reasons I neither need nor want a computer with an NVIDIA card. In no particular order:

- NVIDIA is a pain in the ass under Linux, and even more so under FreeBSD or NetBSD, despite being officially supported.

- I never knew, nor do I want to know, how CUDA, OpenGL, and DirectX work.

- I am not a gamer. To me, games were those marvelous MS-DOS games or some of the games for Win95/98.

- I have no idea what Steam, Proton, Vulkan, or other ineptitudes mean, and I don’t want to find out.

- I don’t do video editing.

- To me, a video card doesn’t need to be much more than a double-buffered RAM. It’s meant to store the values for the pixels that are displayed, not to compute stuff, mine cryptocurrency, or run LLMs.

- I stopped caring about video cards exactly 30 years ago, when a 256 KB Realtek graphics card made in Singapore cost $12 ($25 in today’s money). I’m that Luddite!

- When I read about GPU-accelerated editors and GPU-accelerated terminals, I feel like pushing the nuclear button.

Besides, I hate NVIDIA. So I’m using Intel video.

But sometimes I need to reencode, crop, trim, or otherwise edit video files using ffmpeg. Now, ffmpeg is an abomination:

- Life is too short to try to understand the official documentation. The page for ffmpeg alone is unreadable.

- The commands and the flags are illogical, much more than in the case of pacman, because of the absurd complexity.

- I do store in text files some examples for common scenarios of interest, yet I still fumble to combine the flags in some cases.

- The order of those commands and flags does matter to such an extent that sometimes the chatbots themselves get them wrong!

In short, a nightmare. But it’s the only tool I know of.

And I also know that it doesn’t automatically detect your GPU; therefore, it doesn’t automatically use it. You see, it’s a dumb tool. It needs to be told everything. Now go read the bloody documentation!

I asked Kimi to walk me through making ffmpeg use my Intel GPU under Debian and Ubuntu. There are a few things I thought useful to share.

The need to reencode

In this particular case, I was downloading files from RaiPlay. One of the tools that can do that is yt-dlp. There is also a GUI for it whose package name in Debian and Ubuntu is youtubedl-gui. If you’re allergic to the proper tools, Video DownloadHelper can also download streaming videos, but it fails to see that RaiPlay offers 1080p videos, so it would download 720p.

It also completely ignores the subtitles, which you’d need to download manually. On a video’s page, change the .html at the end of the URL to .json and press Enter. In the resulting page, search for srt and you’ll find something that starts with /dl/video/stl/. Append that string to www.raiplay.it and you’re done.

And if, for some reason, yt-dlp didn’t download the subtitle or if you accidentally deleted it, you can tell it to download only the subtitles:

yt-dlp --write-sub --sub-lang it --skip-download "YOUR_RAIPLAY_URL"

The problem is that such streaming videos aren’t compressed that much. A movie can result in up to 2 GB in 720p, and up to 4 GB in 1080p! This is really too much IMO. They need to be less than half this size.
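
A side note on the manual subtitle route described above: the two URL manipulations are easy to script. This is only a rough sketch under a few assumptions of mine. The function names are made up, I’m assuming the site is reachable over https, and I’m assuming the subtitle path appears in the JSON as a plain quoted string with unescaped slashes.

```shell
#!/bin/sh
# Sketch only: both helper names are invented for this example.

# Turn a RaiPlay page URL into its JSON counterpart (.html -> .json).
raiplay_json_url() {
    printf '%s\n' "${1%.html}.json"
}

# Read JSON text on stdin and print a full URL for every string that
# starts with /dl/video/stl/ (assumes the path sits inside double quotes
# and that https://www.raiplay.it is the right prefix).
raiplay_subtitle_urls() {
    grep -o '/dl/video/stl/[^"]*' | sed 's|^|https://www.raiplay.it|'
}
```

Something like curl -s "$(raiplay_json_url "YOUR_RAIPLAY_URL")" | raiplay_subtitle_urls should then print the subtitle link, provided the JSON really looks the way I assumed.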

So I asked Kimi to help me increase the reencoding speed by making use of the existing Intel GPU.

By default, Debian and Ubuntu are stupid

What happened is that ffmpeg failed to use the GPU encoders, spitting errors that started with this one written in red:

Error creating a MFX session: -9

I investigated the issue with Kimi, and everything seemed to be in place, except for a couple of missing packages:

sudo apt install intel-media-va-driver-non-free libmfx-gen1.2 libvpl2

The first package will replace intel-media-va-driver, which is what both Debian and Ubuntu install.
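
After installing the packages, it may be worth checking that ffmpeg can actually start a QSV encoder before throwing a whole movie at it. The sketch below is my own guess at a reasonable check, not something Kimi suggested: the function names are invented, and the one-second 1280x720 test pattern is an arbitrary choice.

```shell
#!/bin/sh
# Sketch only: function names are invented for this example.

# Print the names of the QSV encoders found in `ffmpeg -encoders` output
# read from stdin (the encoder name is the second column of each row).
list_qsv_encoders() {
    awk '$2 ~ /_qsv$/ { print $2 }'
}

# Encode one second of a synthetic test pattern with hevc_qsv and discard
# the result. Returns ffmpeg's exit status, so a non-zero value means the
# encoder could not be started or failed.
qsv_smoke_test() {
    ffmpeg -hide_banner -loglevel error \
        -f lavfi -i testsrc=duration=1:size=1280x720:rate=30 \
        -vf format=nv12 -c:v hevc_qsv -f null -
}
```

Running ffmpeg -hide_banner -encoders | list_qsv_encoders only tells you whether this ffmpeg build knows about QSV at all. qsv_smoke_test goes one step further and should fail loudly in the situation where I’d expect an error like the MFX session one above.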

How I used it

Here’s the command I used:

ffmpeg -hwaccel qsv -hwaccel_output_format qsv -i "Input file name.mp4" \
-c:v hevc_qsv -global_quality 22 -preset slower \
-look_ahead 1 -look_ahead_depth 40 \
-c:a copy \
"Output file name.mp4"

And here are some explanations:

- qsv means Intel Quick Sync Video

- hevc_qsv is a hardware-accelerated encoder for the H.265 (HEVC) video standard

- look_ahead 1 enables rate control look-ahead (a better bitrate distribution)

- look_ahead_depth 40 analyzes 40 frames ahead (the default is shallower)
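
If you reencode often, the command above can be wrapped in a small shell function so you don’t have to retype the flags. What follows is a minimal sketch, not a polished tool: the name reencode_qsv and the DRY_RUN switch are my own inventions for this example, and the flags are simply the ones explained above.

```shell
#!/bin/sh
# Sketch only: reencode_qsv and DRY_RUN are invented for this example.

# reencode_qsv INPUT OUTPUT [GLOBAL_QUALITY]
# Re-encodes the video to HEVC on the Intel GPU and copies the audio as is.
# GLOBAL_QUALITY defaults to 22. With DRY_RUN set to a non-empty value, the
# command is only printed (without shell quoting, so for display only).
reencode_qsv() {
    if [ "$#" -lt 2 ]; then
        echo "usage: reencode_qsv INPUT OUTPUT [GLOBAL_QUALITY]" >&2
        return 2
    fi
    input=$1
    output=$2
    quality=${3:-22}

    # Build the argument list once so both branches use the same command.
    set -- ffmpeg -hwaccel qsv -hwaccel_output_format qsv -i "$input" \
        -c:v hevc_qsv -global_quality "$quality" -preset slower \
        -look_ahead 1 -look_ahead_depth 40 \
        -c:a copy "$output"

    if [ -n "${DRY_RUN:-}" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}
```

With that in place, reencode_qsv "Input file name.mp4" "Output file name.mp4" 24 runs the same command with a different quality value, and prefixing it with DRY_RUN=1 shows what would be executed without touching the GPU.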

But how to choose global_quality? Here’s what I got as output for an input file of 2.0 GB:

- 20: 1.1 GB

- 22: 890 MB

- 24: 680 MB
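
To put those sizes in perspective against the “less than half” target, here is the arithmetic as a tiny helper. It is only a sketch: size_pct is a made-up name, I’m treating 1 GB as 1000 MB, I’m reading the value for 22 as 890 MB, and the inputs are the rounded sizes reported above, so the percentages are approximate.

```shell
#!/bin/sh
# Sketch only: size_pct is an invented helper name.

# size_pct NEW ORIGINAL
# Prints NEW as a percentage of ORIGINAL, with one decimal.
# Both sizes must be given in the same unit (here: MB).
size_pct() {
    awk -v new="$1" -v orig="$2" 'BEGIN { printf "%.1f\n", new / orig * 100 }'
}
```

For the 2.0 GB input, size_pct 1100 2000, size_pct 890 2000, and size_pct 680 2000 print 55.0, 44.5, and 34.0. So on this particular file, only 22 and 24 get below half of the original size.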

From Kimi, which noted that the quality numbers are slightly different with CPU encoding vs. GPU encoding:

| libx265 CRF | hevc_qsv -global_quality | Typical Result |
|---|---|---|
| 18–20 | 20–22 | Transparent, archival quality |
| 23–24 | 24–26 | Very good, efficient |
| 26–28 | 28–30 | Good, smaller files |
| 30+ | 32+ | Noticeable artifacts |

In all cases, the smaller the value, the higher the video quality (and file size).
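
One way to pick a value without reencoding a whole movie several times is to try a few values on a short excerpt first and compare the results. The following is a rough sketch under my own assumptions: quality_sweep, the sample_qNN.mp4 output names, the DRY_RUN switch, and the default excerpt (60 seconds starting at the 10-minute mark) are all arbitrary choices for illustration.

```shell
#!/bin/sh
# Sketch only: quality_sweep, DRY_RUN and the output names are invented.

# quality_sweep INPUT [START_SECONDS] [DURATION_SECONDS]
# Encodes the same excerpt with -global_quality 20, 22 and 24 and writes
# sample_q20.mp4, sample_q22.mp4 and sample_q24.mp4 to the current
# directory. With DRY_RUN set, the commands are only printed.
quality_sweep() {
    input=$1
    start=${2:-600}
    length=${3:-60}

    for q in 20 22 24; do
        # -ss and -t placed before -i limit what is read from the input.
        set -- ffmpeg -hide_banner -ss "$start" -t "$length" \
            -hwaccel qsv -hwaccel_output_format qsv -i "$input" \
            -c:v hevc_qsv -global_quality "$q" -preset slower \
            -look_ahead 1 -look_ahead_depth 40 \
            -c:a copy "sample_q$q.mp4"

        if [ -n "${DRY_RUN:-}" ]; then
            printf '%s\n' "$*"
        else
            "$@" || return 1
        fi
    done
}
```

After quality_sweep "Input file name.mp4", a plain ls -lh sample_q*.mp4 shows how the sizes compare, and you can watch the three excerpts to see where the quality stops being acceptable to you. Keep in mind that one minute of video isn’t necessarily representative of the whole file.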

Why Intel is better than NVIDIA for video encoding

This might sound counterintuitive (and it is!), but Kimi spat out this table early in the discussion:

| Encoder | Quality at Low Bitrate | Quality at High Bitrate | Speed |
|---|---|---|---|
| libx265 (CPU) | Best | Excellent | Very slow |
| hevc_nvenc (NVIDIA) | Good | Very good | Extremely fast |
| hevc_qsv (Intel) | Surprisingly good | Excellent | Fast |
| hevc_vaapi (Intel/AMD) | Mediocre | Good | Fast |

Later, I asked it:

Let me see if I understood correctly. When encoding with ffmpeg and targeting H.264 or H.265:

- The CPU-only encoding can lead to excellent quality, but the encoding speed is very low.

- The NVIDIA-based encoding speed is excellent, but the quality is mediocre because there is no look-ahead.

- The Intel GPU-based encoding speed is better than the CPU-only one, but much lower than the NVIDIA-based one; however, the quality is very good because look-ahead can be enabled.

Kimi:

That summary is accurate. Here’s a concise comparison table based on your findings and the technical reality:

| Encoder | Speed | Quality | Look-ahead | Best For |
|---|---|---|---|---|
| CPU libx265 | Very slow | Excellent | Deep (software) | Archival, maximum compression |
| NVIDIA hevc_nvenc | Extremely fast | Mediocre | None or shallow | Streaming, screen capture, speed-critical workflows |
| Intel hevc_qsv | Fast | Very good | Yes (look_ahead 1) | Balanced everyday encoding on laptops |

Your Intel setup hits a practical sweet spot: fast enough for batch jobs, good enough quality that you won’t notice artifacts on typical content, and power-efficient enough that your laptop doesn’t melt. The NVIDIA gap on quality is real: NVENC’s fixed-function pipeline simply can’t afford the latency of deep lookahead analysis.

It also explained why look-ahead could not be implemented for NVIDIA:

NVIDIA NVENC is a fixed-function ASIC on the GPU die, and its architecture simply doesn’t have a lookahead buffer or frame analysis stage that can be enabled. It’s a hardware limitation. Here’s why:

How Look-ahead Actually Works

Look-ahead analyzes future frames to make better bitrate allocation decisions:

- Which scenes need more bits (complex motion, fine detail)

- Which can afford fewer (static shots, dark frames)

- Where to place keyframes optimally

This requires buffering dozens of frames in memory and running statistical analysis on them before the encoder commits to bit allocation.

Why Intel Can Do It But NVIDIA Can’t

| Aspect | Intel QSV | NVIDIA NVENC |
|---|---|---|
| Architecture | Hybrid: GPU shaders + fixed encode block | Pure fixed-function ASIC |
| Look-ahead | Runs on GPU EUs (shaders) before the encode block | Encode block has no shader integration |
| Frame buffer | Can keep frames in GPU memory, run analysis, then feed encoder | Encoder receives frames sequentially, decides immediately |
| Latency trade-off | Accepts higher latency for better quality | Optimized for ultra-low latency streaming |

Intel’s QSV is architected as a two-stage pipeline: the GPU’s general-purpose execution units run analysis/lookahead, then feed the fixed-function encoder. NVIDIA’s NVENC is a single-stage pipeline optimized for throughput and minimal latency.

Could NVIDIA Have Implemented It?

Technically yes, but it would require a fundamentally different hardware design:

- Add shader-accessible frame buffers to the encode block

- Increase on-chip memory for multi-frame analysis

- Accept higher latency — problematic for NVENC’s primary use case (game streaming, video conferencing)

NVIDIA has chosen to keep NVENC as a low-latency, high-throughput encoder. They offload quality-sensitive encoding to their CUDA-based solutions (like the now-deprecated nvenc_export or third-party tools) rather than the fixed-function block.

In practice:

- If you want NVIDIA speed + lookahead quality, you use CPU decode → CUDA filters → NVENC encode, but the lookahead still happens in CUDA software, not the NVENC hardware itself.

So it’s not a missing driver feature. It’s a hardware design trade-off NVIDIA made deliberately.

The obvious question:

But then why are NVIDIA cards used for AI, when they are so dumb?

Kimi:

The “dumbness” of NVENC is precisely what makes NVIDIA dominant in AI. You’re comparing two completely different hardware blocks on the same die.

NVENC Is One Small Block. CUDA Is the Rest.

A modern NVIDIA GPU is not a monolithic encoder. It contains:

| Block | Purpose | “Smart”? |
|---|---|---|
| NVENC | Fixed-function video encode | No: fast, dumb pipeline |
| NVDEC | Fixed-function video decode | No: fast, dumb pipeline |
| CUDA Cores / Tensor Cores | General-purpose parallel compute | Extremely flexible |
| RT Cores | Ray-tracing acceleration | Specialized, not programmable |
| Memory Controllers | High-bandwidth GDDR6/HBM access | - |

NVENC occupies a tiny sliver of the die. CUDA and Tensor Cores occupy the vast majority.

Why AI Loves NVIDIA

AI training and inference need exactly what CUDA provides:

- Massive parallel matrix multiplication (the core of neural networks)

- Programmable shaders/compute units that can run arbitrary code

- High-bandwidth memory (HBM/GDDR6X) for feeding large models

- Tensor Cores — specialized but still programmable units for mixed-precision ops

- Mature software stack (CUDA, cuDNN, TensorRT, PyTorch, JAX)

The fixed-function blocks (NVENC/NVDEC) are irrelevant to AI workloads. They exist for video conferencing, game streaming, and content creation — markets where latency matters more than analytical quality.

The Real Comparison

| Task | What You Need | Best Hardware |
|---|---|---|
| Video encoding (quality) | Deep lookahead, psychovisual analysis, rate-distortion optimization | CPU (libx265) or Intel QSV (hybrid shader+ASIC) |
| Video encoding (speed) | Minimal latency, high throughput | NVIDIA NVENC |
| AI training/inference | Massive parallel compute, programmable, high memory bandwidth | NVIDIA CUDA/Tensor Cores |
| Video decode for AI pipelines | Fast batch decoding | NVIDIA NVDEC (feeds frames directly into GPU memory for CUDA processing) |

Why Intel Struggles in AI Despite Smart Video

Intel’s GPU architecture (Xe, Arc) also has EUs (Execution Units) and matrix engines. But:

- Software ecosystem: CUDA has a 15-year head start. PyTorch, TensorFlow, and every major framework optimize for NVIDIA first.

- Memory bandwidth: NVIDIA’s H100 has 3 TB/s of HBM3 bandwidth. Intel’s Gaudi2/Arc cards compete on paper but lack ecosystem maturity.

- Interconnect: NVIDIA’s NVLink and InfiniBand dominance in data centers creates lock-in.

Intel’s video encoder is architecturally more flexible than NVENC. But AI doesn’t need video encoders. It needs fast, programmable matrix math with mature software.

Bottom Line

NVIDIA GPUs are not “dumb.” They are specialized. The NVENC block is dumb by design — that’s why it’s fast. The CUDA/Tensor Core blocks are among the most programmable and powerful parallel processors ever built. That’s why AI runs on NVIDIA.

Your frustration is with one tiny fixed-function corner of a massive compute device. The rest of the device is why NVIDIA owns the AI market.

Which means they are dumb. I’m a Luddite. I hate ARM CPUs because they’re RISC, hence dumb. I’m a CISC guy. I’m old-school.

Your System Monitor is completely useless

Question:

KDE System Monitor and MATE System Monitor show very low CPU loads while ffmpeg encodes, and KDE also shows 0% GPU load. WTF? Can’t it be faster if it used more CPU and GPU?

Kimi:

The low CPU and 0% GPU readings are monitoring artifacts, not evidence that your hardware is idle. Here’s what’s actually happening.

Why CPU Appears Low

Intel QSV encoding is asynchronous and offload-heavy:

- The CPU does minimal work: it parses the container, copies packet headers, and hands frame references to the driver

- The actual encode happens on the fixed-function media block inside the iGPU — this is not a CPU core, not a GPU shader, and not counted by standard CPU load metrics

- The CPU thread often sleeps waiting for the driver to return finished frames

System monitors count CPU time spent in kernel driver calls as “idle” or “iowait” because the thread is blocked, not executing instructions.

Why GPU Shows 0%

This is the bigger misconception. “GPU load” in system monitors means 3D engine / shader utilization (what games use). Intel QSV uses the Media Fixed Function block — a separate silicon area that:

- Is not CUDA cores / EUs / shaders

- Is not the 3D pipeline

- Has no performance counters exposed to standard system monitors

KDE System Monitor and MATE System Monitor only sample the 3D render engine. They are blind to the video codec block.

Tools that can see it:

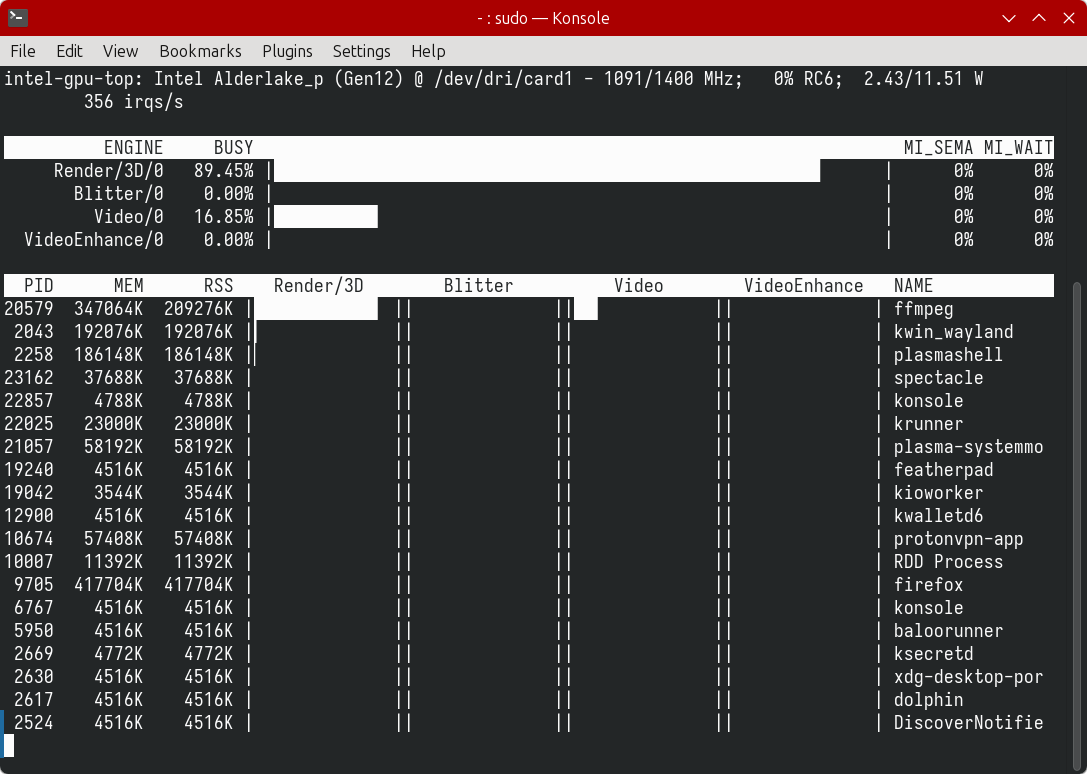

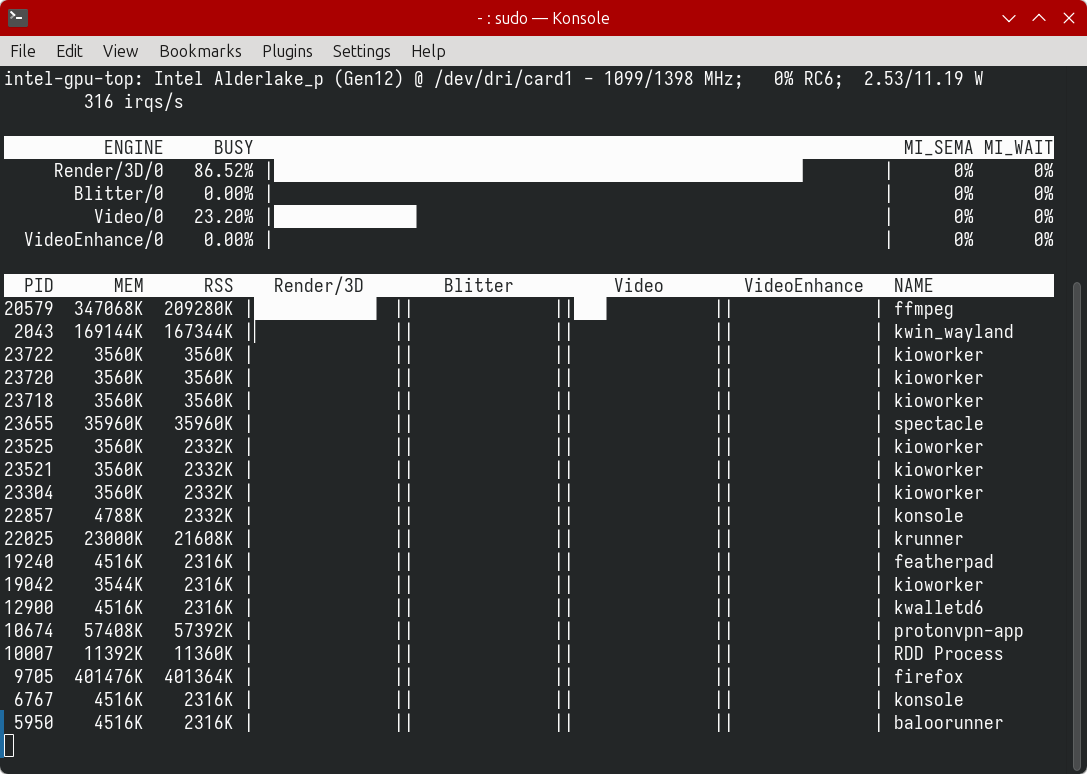

# Intel GPU Top (shows video engine specifically)
sudo apt install intel-gpu-tools
sudo intel_gpu_top

# Or for per-process view
sudo intel_gpu_top -p $(pgrep ffmpeg)

Summary

| What You See | What It Means |
|---|---|
| Low CPU | Correct. QSV offloads to fixed-function hardware. |
| 0% GPU | Misleading. Monitor measures 3D engine, not video codec block. |
| Could it be faster? | Only if the media engine itself is underutilized, which is rare with QSV. |

Your system is working as designed.

It was right:

But then, why isn’t anyone, not even KDE and GNOME, implementing proper GPU monitoring in their completely useless system monitors? Oh, they were busy implementing Wasteland Wayland…
